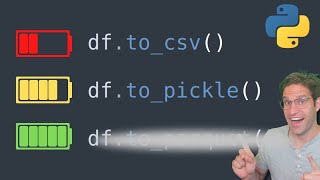

Speed Up Your Pandas Dataframes


- Published: May 22, 2024

- In this video Rob Mulla teaches how to make your pandas dataframes more efficient by casting dtypes correctly. This will make your code faster, use less memory, and produce smaller files when saving to disk or a database.

Timeline:

00:00 Intro

00:47 Imports and Data Creation

02:32 Dataframe Memory Use

03:20 Baseline Speed Test

04:15 Casting Categorical

05:45 Downcasting Ints

07:07 Downcasting floats

08:15 Casting Bool Types

09:15 Benchmark Comparison

11:08 Outro

Thanks for taking the time to watch this video. Follow me on twitch for live coding streams: / medallionstallion_

Speed up Pandas Code: • Make Your Pandas Code ...

Intro to Pandas video: • A Gentle Introduction ...

Exploratory Data Analysis Video: • Exploratory Data Analy...

* YouTube: youtube.com/@robmulla?sub_con...

* Discord: / discord

* Twitch: / medallionstallion_

* Twitter: / rob_mulla

* Kaggle: www.kaggle.com/robikscube

#python #code #datascience #pandas

That's a brilliant way to save memory & computational cost. Thanks Rob ! it was very useful.👍

Exactly! Casting the correct column types is very important to speeding up your code.

I really predict that this guy's channel is gonna grow a lot. The content is pure without any bs and straight to the point with actually new info.

Thanks for the feedback. I hope you’re right.

Listen Rob, i came across your channel pretty randomly and your content is pure gold! straight to the point, and professionally presented! Thanks a lot! keep rocking

I love all the videos with these tricks that are critical in the daily developer activities! thanks so much

Thanks Filippo

Wow, this is one of the things that we rarely encounter in courses and yet the impact matters so much for overall efficiency. Thank you for making these types of videos. Wish this channel the best!

Thanks for the positive feedback. Totally agree that some of these specific details might not be covered in school but are great at speeding up your code and pipelines!

@@robmulla it depends very much on the amount of data. Essentially you are making a trade off between faster and more efficient code and developing time. If you work with relatively small amounts of metadata and quickly want to get something done, this might be too much of a hassle. But if your code goes to production and has to go through massive amounts of data then it's certainly worth it.

@@dirk-jantoot1029 Good point. There is always a tradeoff between time spent on implementation and code speed, but it's still best practice to properly set column dtypes.

this is incredibly useful information and explained nicely! subbed

Thanks for the feedback

It's just wow! Beginners like me really appreciate your video. Keep it up my man.

Glad it was helpful to you. Thanks for the feedback.

Very nice videos mate! Thanks for sharing your knowledge with us

Glad you like them!

This note in the docs goes into detail about how categorical values only reduce memory use when the number of unique values is low: pandas.pydata.org/pandas-docs/stable/user_guide/categorical.html#categorical-memory

What would you recommend is a good formula for determining when it should be categorical vs simple string? Unique values < 50% df length?
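One way to answer this for your own data is to measure it rather than rely on a fixed threshold. The sketch below builds synthetic string columns (the `label_N` values and the helper name `category_ratio` are made up for illustration) and compares deep memory use as `object` versus `category`. The ratio should be far below 1 when there are few unique values and should climb toward, or past, 1 as the column approaches all-unique values.

```python
import numpy as np
import pandas as pd


def category_ratio(n_rows: int, n_unique: int) -> float:
    """Return (category memory) / (object memory) for a synthetic string column."""
    # Hypothetical labels; real data will have different string lengths.
    labels = np.array([f"label_{i}" for i in range(n_unique)], dtype=object)
    values = labels[np.arange(n_rows) % n_unique]  # cycle through the labels
    as_object = pd.Series(values, dtype=object)
    as_category = as_object.astype("category")
    return as_category.memory_usage(deep=True) / as_object.memory_usage(deep=True)


low_cardinality = category_ratio(100_000, 10)
all_unique = category_ratio(100_000, 100_000)
print(f"10 unique values: category uses {low_cardinality:.1%} of the object memory")
print(f"all values unique: category uses {all_unique:.1%} of the object memory")
```

Running the same comparison on a sample of your real column is probably a better guide than any single percentage rule.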

Dude, where were you when I was starting out... you would have saved me hours of struggle. Great content. Please keep it coming.

So glad I could help you out Edmund! Share the channel with anyone else you think might also find it helpful.

That's brilliant! Thanks, Rob!

Thx Rob, really enjoyed this episode 👍🏼

Thanks!

I'm surprised how much info I learnt from this video, really good work!

Glad it was helpful! Check out my other videos and share it with some friends!

@@robmulla I will for sure! :)

Thanks Rob, for sharing such a great concept.

Thanks for watching Rakesh.

this is life changing

Fantastic Video, definitely using this in my daily work.

Glad you like it! I hope to continue to create more videos like this in the future.

Great explanation. Just found out about that a while ago and wish I would have seen this video first instead of doing a bunch of googling. Was trying to get a 36M record dataset with categoricals and positional data to fit in memory on an average laptop for a mapping application. Recasting the datatypes made it all work out.

Glad you enjoyed the video! Casting dtypes correctly is really helpful but easy to overlook

Thanks Rob! for making this useful video

Glad you found it useful. Thanks for watching.

Nice vid! Glad I ran into your channel...subscribed!

Thanks so much for the feedback! Hope you enjoy the other videos too.

Thank you for making this video.

My pleasure! Thanks for watching.

Perfect !

Thanks!

Appreciate the super thanks!!

helpful video !

Thanks, it's useful, will apply it in my project

Glad to hear you found it useful Rajesh!

awesome!!

Thanks Akshat

I deal with stock data for my job a lot- dealing with data frames that have daily data for 3000 companies across 20+ years means dealing with 16+ million rows. These tips are incredibly helpful for saving memory- which for my role is often the limiting factor of pandas and my computer. Too much memory load can slow down groupby calls, your computer as a whole and all code, and even worse crash your computer which has happened to me.

Glad this was helpful for you. Sounds like you are working with a lot of data, must be fun!

Working with 24 million rows of TMY weather data and I totally feel your pain

After watching 70% length of this video, i just stopped the video and mashed the like button. 🔥

What took you so long. 😂

awesome man, gonna save me $$$$ by not upgrading cpu but downsizing code !!!

Glad I could help! Efficient code is important!

Good explanation, thank you very much 👋👍🙂

Glad it was helpful! Thanks for watching.

Thanks

Welcome

Very nice video, thanks for the tips! If you do an update you could talk about unsigned integers also, like "uint8" for the Age data?

Great suggestion! Thanks for the feedback.
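As a small sketch of that suggestion: an age column never goes negative, so an unsigned type fits. The synthetic `age` data below is an assumption for illustration; `astype("uint8")` sets the type explicitly, while `pd.to_numeric(..., downcast="unsigned")` lets pandas pick the smallest unsigned type that holds the values.

```python
import numpy as np
import pandas as pd

rng = np.random.default_rng(0)
# Synthetic ages between 18 and 99, stored as int64 to start with.
age = pd.Series(rng.integers(18, 100, size=1_000, dtype=np.int64), name="age")

age_u8 = age.astype("uint8")                        # explicit cast
age_auto = pd.to_numeric(age, downcast="unsigned")  # pandas chooses the type

# int8 covers -128..127, uint8 covers 0..255.
print(np.iinfo(np.int8).max, np.iinfo(np.uint8).max)
print(age.nbytes, age_u8.nbytes, age_auto.dtype)
```

Note that `uint8` and `int8` use the same amount of memory; the unsigned type only buys a larger positive range.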

hi,thanks for this video.

Thanks for watching. Hope you found it helpful.

Awesome! I saw in a Video by Matt Harrison that, there is a library which sort of tells which dtypes needs to be converted to do memory saving. Unfortunately, I do not remember exact video. Are you aware of any such library?

Thanks! I don’t know of that library but let me know if you find it.

Why did you use the ‘map’ method over the ‘astype’ when changing the yes/no strings to bool?

Thanks again for this vid.

I think I did it that way because you need to define how to convert the strings to a bool. astype(bool) won't know how to convert those strings: it treats any non-empty string as True.
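A minimal sketch of the difference, assuming a small object-dtype `win` column of "yes"/"no" strings (the data is made up):

```python
import pandas as pd

win = pd.Series(["yes", "no", "yes", "no"], dtype=object)

# astype(bool) uses Python truthiness: every non-empty string becomes True.
naive = win.astype(bool)

# map() spells out the conversion explicitly.
mapped = win.map({"yes": True, "no": False})

# A comparison gives the same result and avoids the dictionary lookup.
compared = win == "yes"

print(naive.tolist())
print(mapped.tolist())
```

One thing to watch with `map`: any value missing from the dictionary becomes NaN, which turns the column back into `object` dtype.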

thanks. stuff a newb ie me would not think about

This guy is a legend.

He started by using “size” in the random function which he didn’t define.

Please did you have the value of “size” in memory before you started recording?

I was about to ask this same question

Category columns are great, but it's important to set observed=True when doing a groupby.

Whoa! I didn’t know about that option. Need to try it next time. Actually would’ve been helpful on yesterdays stream.
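A small sketch of what that option changes, using a made-up `team` column that declares a category ("green") which never appears in the data:

```python
import pandas as pd

df = pd.DataFrame(
    {
        "team": pd.Categorical(
            ["red", "red", "blue"], categories=["red", "blue", "green"]
        ),
        "score": [1, 2, 3],
    }
)

# observed=False keeps every declared category, including the unused "green".
all_groups = df.groupby("team", observed=False)["score"].sum()

# observed=True only returns categories that actually occur in the data.
seen_groups = df.groupby("team", observed=True)["score"].sum()

print(all_groups)
print(seen_groups)
```

With several categorical keys, `observed=False` produces every combination of categories, which can make the result much larger and slower than expected.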

Excellent video. Would converting the 'yes/no' to 1 or 0 save as much space as converting them to bool?

Thanks for watching. I believe any int will always take up more memory than a bool. That is because a bool only uses one bit. int8, int16, etc use 8, 16 bits. A bool is essentially an int1

@@robmulla This is not completely true.

In theory a bool needs only 1bit (true/false, 0/1).

In practice CPUs can't address anything smaller than a byte, therefore a bool usually needs 1byte (8bit) of memory just like an int8.

Nevertheless great video, thanks!

@@juliansteden2980 Thanks for clarifying! I stand corrected, that's interesting to know but totally makes sense.
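This is easy to check directly. The sketch below (the `win` data is made up) compares the raw buffer size of the same column stored as bool, int8 and int64:

```python
import numpy as np
import pandas as pd

win = pd.Series(["yes", "no"] * 500, dtype=object)  # 1,000 rows

as_bool = win == "yes"
as_int8 = as_bool.astype("int8")
as_int64 = as_bool.astype("int64")

# NumPy stores one bool per byte, so bool and int8 take the same space.
print(np.dtype(bool).itemsize, np.dtype("int8").itemsize)
print(as_bool.nbytes, as_int8.nbytes, as_int64.nbytes)
```

So 1/0 stored as int8 costs the same as bool; it is the default int64 that costs eight times more.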

Sorry, could you tell me how the table had 1,000 rows the first time you made it? I'm asking because the code says size=size, but I don't get where the size of 1,000 comes from.

Awesome vids btw!

Great question. I think someone else pointed this out. I think I might have edited out that part of the video but I did end up editing that function to take in the size = 1000 at some point. Check the gist I posted here: gist.github.com/RobMulla/f04b144bb766b692f9314e3782d724d3

3x49x4x2=1176. Glad this comment was in here. Drove me a little nuts not knowing where 1000 came from.
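For anyone hitting the NameError, here is a minimal sketch of a data-creation function that takes `size` as a parameter with a default. It is not the code from the video or the gist: the function name `get_dataset`, the column names, and the value lists are assumptions for illustration.

```python
import numpy as np
import pandas as pd


def get_dataset(size: int = 1_000, seed: int = 0) -> pd.DataFrame:
    """Build a synthetic dataframe with `size` rows (hypothetical columns)."""
    rng = np.random.default_rng(seed)
    return pd.DataFrame(
        {
            "position": rng.choice(["left", "middle", "right"], size=size),
            "age": rng.integers(1, 50, size=size),
            "team": rng.choice(["red", "blue", "yellow", "green"], size=size),
            "win": rng.choice(["yes", "no"], size=size),
            "prob": rng.uniform(0, 1, size=size),
        }
    )


df = get_dataset(size=10_000)
print(df.shape)
print(df.dtypes)
```

Because `size` is now an argument, nothing has to exist in the notebook's memory before the function is called.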

🎯 Key Takeaways for quick navigation:

00:00 📊 *Efficient Memory Use in Pandas Introduction*

- Importance of efficient memory use in pandas for code speed, reduced memory consumption, and storage efficiency.

02:44 📉 *Initial Data Size and Considerations*

- Creating a large dataset (1 million rows) and checking its memory usage.

- Highlighting the impact of increasing data size on performance and memory requirements.

05:27 🧹 *Optimizing Categorical Columns*

- Demonstrating memory reduction by casting categorical columns (position and team).

- Significant reduction in dataset size by utilizing categorical data types.

06:44 🎲 *Downcasting Integer Columns*

- Explaining downcasting of integer columns to smaller types for memory optimization.

- Choosing appropriate integer types based on the data range to avoid information loss.

07:40 📉 *Downcasting Float Columns*

- Downcasting float columns to reduce memory usage while maintaining precision.

- Highlighting the impact of float type selection on data frame size.

09:02 🧹 *Optimizing Boolean Columns*

- Efficiently casting boolean columns for minimal memory usage.

- Using boolean type for binary data representation (win column).

10:23 🔄 *Performance Comparison*

- Comparing computation times before and after applying dtype optimizations.

- Demonstrating the overall improvement in code performance and memory efficiency.

Made with HARPA AI
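The steps in the takeaways above can be sketched end to end as follows. The synthetic data, the column names (`position`, `team`, `age`, `prob`, `win`) and the helper name `set_dtypes` are assumptions for illustration, not the video's exact code.

```python
import numpy as np
import pandas as pd

rng = np.random.default_rng(0)
n = 100_000
df = pd.DataFrame(
    {
        "position": rng.choice(["left", "middle", "right"], size=n),
        "team": rng.choice(["red", "blue", "yellow", "green"], size=n),
        "age": rng.integers(1, 50, size=n),
        "prob": rng.uniform(0, 1, size=n),
        "win": rng.choice(["yes", "no"], size=n),
    }
)


def set_dtypes(frame: pd.DataFrame) -> pd.DataFrame:
    """Return a copy with each column cast to a smaller, better-fitting dtype."""
    out = frame.copy()
    out["position"] = out["position"].astype("category")  # few unique strings
    out["team"] = out["team"].astype("category")
    out["age"] = out["age"].astype("int8")                # values fit in -128..127
    out["prob"] = out["prob"].astype("float32")           # about 6 significant digits
    out["win"] = out["win"] == "yes"                      # yes/no strings to bool
    return out


small = set_dtypes(df)
before = df.memory_usage(deep=True).sum()
after = small.memory_usage(deep=True).sum()
print(f"before: {before:,} bytes, after: {after:,} bytes")
```

To compare speed as well, time the same `groupby` on `df` and `small` (for example with `%timeit` in a notebook); the numbers will depend on your machine and pandas version.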

👏👏👏👏👏

💪

very nice video ^^ just a question... will this help with the browser error "not enough memory" when doing EDA via Jupyter notebook? thanks ^^

Thanks for the comment. It’s probably the cause of the error if you are running out of memory.

Hi Rob, very useful thank you, but how do you deal with the following situation: you have a large MongoDB collection that you want to use locally to develop functions and play with the data. If you import it as a pandas data frame, it is just too large for the PC to handle. What's the best practice in this case? Worth a video tutorial? Thank you

Thanks. Can you aggregate the data in some way before exploring it locally? You could also just get a really large ec2 instance to run it on :D - another option would be something like dask. I have a video about pandas alternatives you should check out.

I know there's a Python module that takes a dataframe and calculates what type transformations one could do on the columns to reduce the size of the frame (it's pretty neat). Can't remember the name of the package now, though... I watched a YT video on it just about yesterday.

Cool! Let me know if you find it. There is a function that I’ve used before from Kaggle that does it.

if you use the astype and round operators on float data, pandas needs to set the signature or leave float64 by default ?

Not sure I totally understand the question. But float precision depends on how precise you need the values to be.
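A short sketch that may help with this question (the sample values are made up): `round` does not change the dtype, so the column stays float64 until you cast it, and casting to float32 keeps roughly 6 to 7 significant digits.

```python
import numpy as np
import pandas as pd

prob = pd.Series([0.123456789012345, 1234567.891, 0.001])

rounded = prob.round(2)          # still float64; only the values change
prob32 = prob.astype("float32")  # half the memory, less precision

# Absolute error introduced by the float32 cast, measured in float64.
error = (prob32.astype("float64") - prob).abs()

print(rounded.dtype, prob32.dtype)
print(np.finfo(np.float32).precision, np.finfo(np.float64).precision)
print(error)
```

Whether that error matters depends on the data: it is usually harmless for probabilities, but can be a problem for large values that need exact decimals.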

Hey! I've recently really enjoyed watching your videos. Could you maybe create a video in which you explain how I can run my python scripts automatically online, so that I don't always have to do this manually and with a switched on computer? I am getting a little bit more advanced through your videos and I'd be super interested in this topic. Cheers! :)

Thanks for watching. That’s a great idea for a video. I think it would differ a lot how you would automate it depending on what you were running. Small program vs a really computational intense process.

@@robmulla Sounds amazing! Thanks for appreciating the idea :)

Question : what is the time complexity of all those cast operations ?

That’s a great question. I don’t know exactly but i haven’t ever come across a time when the cast operation has been an issue.

@@robmulla thx :)

Does saving it in parquet, and then reading it back retains the dtypes? (Dont have a pc with pyarrow installed near me)

Great question. Yes it does!

@@robmulla Great. Another reason to use parquet!

Can you use this astype method if the column contains missing data?

Good question. It depends. NumPy-backed int or bool columns can't contain nulls; floats can. Pandas' nullable dtypes (such as "Int64" and "boolean") do allow missing values.
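A minimal sketch of both cases, with made-up data: casting a column that contains NaN to a plain NumPy integer type raises an error, while the capitalised nullable dtypes keep the missing value as `<NA>`.

```python
import numpy as np
import pandas as pd

age = pd.Series([21.0, np.nan, 35.0])

# A NumPy-backed int8 has no way to represent a missing value.
raised = False
try:
    age.astype("int8")
except ValueError:
    raised = True

# The nullable extension dtype "Int8" (capital I) stores a separate missing mask.
age_nullable = age.astype("Int8")

# The same idea exists for booleans.
win = pd.Series([True, None, False], dtype="boolean")

print(raised)
print(age_nullable)
print(win)
```

The nullable types use one extra byte per value for the mask, so they are slightly larger than the plain NumPy equivalents.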

Isn´t there a library or function to do this? or at least some of the steps?

For example, I made this code which could help anyone. But I bet there are even better options out there:

# 6) Change dtypes

for col in data1.columns:
    # A float64 column with no fractional part (and no NaN) can be stored as an int.
    if data1[col].dtype == 'float64' and (data1[col] % 1 == 0).all():
        data1[col] = data1[col].astype('int64')
    # Pick the smallest signed int type whose range covers the column.
    if data1[col].dtype == 'int64':
        lo, hi = data1[col].min(), data1[col].max()
        if lo >= -128 and hi <= 127:
            data1[col] = data1[col].astype('int8')
        elif lo >= -32768 and hi <= 32767:
            data1[col] = data1[col].astype('int16')
        elif lo >= -2147483648 and hi <= 2147483647:
            data1[col] = data1[col].astype('int32')
    # Columns with fractional values are left as float64 here.

I haven't used it myself but you could also look into this: pypi.org/project/pandas-dtype-efficiency/

Understanding this concept is important beyond just pandas dataframes, but I agree it could be somewhat automated.

This function is good (I've used it on Kaggle before), but you need to be sure you are OK with casting the datatypes automatically. For instance, if you expect new data to be added that could be larger or more precise, or if you don't want columns cast to categorical, then you might not want to do it automatically.

@@robmulla Sorry for my late response. Thank you for the answer! Yes, that package automates a big part of the process. I would add some functionality to make it even more customizable, but it's great for anyone who wants to check it out.

I also agree with you that it´s important to understand the concept. I needed an automation because after your video I use this frequently in large datasets.

@@robmulla Yes, you are right. I usually work with past data that will not be updated in the future so the function comes in handy. But of course, if new data will be added one should cast dtypes carefully.
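For the numeric columns, pandas can already choose the smaller type through the `downcast` argument of `pd.to_numeric`. The sketch below wraps that in a hypothetical helper, `downcast_numeric` (not a pandas function, and not the package linked above); the example frame is made up. It leaves strings alone, so categorical casting stays a manual decision.

```python
import pandas as pd
from pandas.api.types import is_float_dtype, is_integer_dtype


def downcast_numeric(df: pd.DataFrame) -> pd.DataFrame:
    """Return a copy with int and float columns downcast where values allow."""
    out = df.copy()
    for col in out.columns:
        if is_integer_dtype(out[col]):
            # Smallest signed integer type that holds the column's range.
            out[col] = pd.to_numeric(out[col], downcast="integer")
        elif is_float_dtype(out[col]):
            # float32 at the smallest; pandas keeps float64 if values would change too much.
            out[col] = pd.to_numeric(out[col], downcast="float")
    return out


example = pd.DataFrame(
    {"a": [1, 2, 300], "b": [0.5, 1.5, 2.25], "c": ["x", "y", "z"]}
)
small = downcast_numeric(example)
print(small.dtypes)
```

As noted in the replies above, this is only safe when future data will stay within the ranges seen so far.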

sir can u plz xplain how we can convert string to catagory without using any inbuit function

I'm not sure what you mean. You can set the dtype to 'category' using .astype('category') read more about it here: pandas.pydata.org/docs/user_guide/categorical.html

@@robmulla thanks for reply sir but lemmi clear my question again. without using astype or any inbuilt method how to convert the dtype to categorical of any column

@@mcdolla7965 not sure that’s possible.

@@robmulla it is possible sir, inside categorical class is being called and two lists are returned but mechanics were too complex to be understood by me, but im sure you will help me out ,plz send me your email id,so that i will send u the github link then you can go through it..nd that would be gr8 content for your channel too..cuz nowhere its available.

Easy way to optimize run time

NameError: name 'size' is not defined

this is what i get from the beginning, why?

Are you sure you wrote the code correctly? It looks like you might have not been running size as a method and instead python thinks it's a variable.

@@robmulla I got same problem too, but I have been following your instruction correctly and still get that error

and the error in the Kaggle notebook looks like it comes from a variable that has not been declared

amazing 38 mb to 7 mb 🤩

Yes 😁 its crazy how much space can be saved!

this was traffic

is 10_000 equivalent to 10000?

Yes! It was added in python 3.6 peps.python.org/pep-0515/
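A quick sketch to confirm this in an interpreter: the underscores are purely visual, and the same separator works when parsing and formatting numbers.

```python
n = 10_000

same_value = n == 10000      # underscores do not change the value
parsed = int("10_000")       # int() accepts underscores in strings too
formatted = f"{1000000:_}"   # and "_" works as a thousands separator when formatting

print(same_value, parsed, formatted)
```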

"NameError: name 'size' is not defined"

Team, not "time"! where is size variable init ?

Not sure I get what you mean. Did I misspeak in the video?

Huh!?

If you really want to use Python and run into big bottlenecks, first try moving all things to numpy only...

That’s not always so easy but I agree in some cases it is necessary indeed.

Error in the thumbnail: pd.DataFrame

Nice catch!

Perfect!